Chapter 4

FRAMEWORK FOR EXPERIENCE-BASED VIRTUAL PROTOTYPING

This chapter discusses the approach to developing interactive virtual prototypes that enable experience-based design. It begins with a brief overview of how experience-based design can be combined with virtual prototypes by incorporating scenarios of tasks performed by end-users.

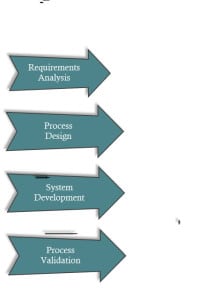

The second part of the chapter proposes a procedure for incorporating task-based scenarios into the development process for virtual facility prototypes. The process is divided into four parts: requirements analysis, process design, process implementation and development, and process validation. An overall plan for the experience-based virtual prototyping system is provided in Appendix A. The virtual prototyping process was implemented on several project cases to test and refine it.

In process design, a system architecture is proposed for the development of interactive virtual prototypes. Based on this architecture and the requirements analysis, the experience-based design review process is defined, and the model content and interactive media to be used for virtual prototyping are determined.

In prototype development and process implementation, workflows for transferring model content from design authoring and Building Information Modeling (BIM) applications to the interactive programming environment are discussed. Project cases are used as examples to demonstrate lessons learned and the refinement of these procedures. Procedures for developing interactivity are discussed, and the implementation of the scenario framework for simulating scenarios in the prototype is presented. An approach to developing reusable model content is also discussed.

Finally, in process validation, the project cases used to develop the Experience-based Virtual Prototyping System (EVPS) process are reviewed and the lessons learned are summarized. Informal interviews with domain experts to validate the development procedure are also discussed.

4.1 METHOD TO DEVELOP EXPERIENCE-BASED VIRTUAL PROTOTYPES

Experience-based design can be combined with virtual prototyping by allowing end-users to simulate scenarios of tasks that they perform in their facilities. The approach for developing the experience-based virtual prototyping system is to look at the design review process of healthcare facilities through the lens of experience-based design, using interactive virtual prototypes as the tool. Game engine-based applications allow scenarios of tasks to be embedded in virtual prototypes, which can enhance the design review process, especially for end-users.

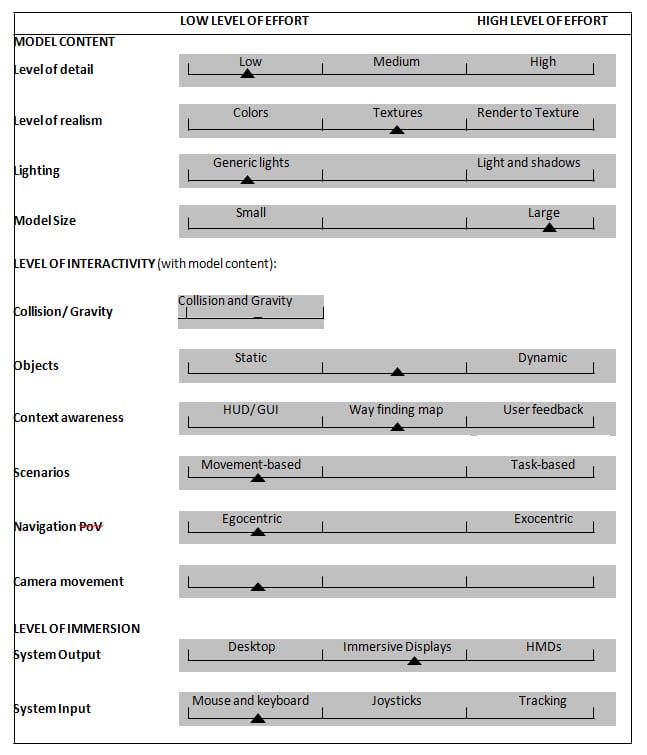

A method to develop virtual prototypes with embedded scenarios is shown in Figure 4-1. The process is adapted from requirements analysis methods used in software engineering (Robertson and Robertson 1999) and virtual reality system development methods (Sherman and Craig 2003). The detailed virtual prototyping process is adapted from Wilson (1999) and discussed at the end of this chapter. The following sections discuss the four overarching steps: Requirements Analysis, Concept and Process Design, Development, and Process Validation.

Figure 4-1. Experience-based virtual prototyping procedure

4.2 REQUIREMENTS ANALYSIS

In requirements analysis, a framework for mapping scenarios to the spaces involved and the level of detail required is proposed. This is an exploratory research phase that helps define a virtual prototyping procedure for extracting end-users' experience and tacit knowledge of the work tasks undertaken in a healthcare facility. Requirements analysis begins with identifying stakeholders and defining their overall goals for using the interactive virtual prototype for design review.

“A requirement is something that a product must do or a quality that the product must have.” A requirement exists either because the type of product demands certain functions or qualities, or because the end-user wants that requirement to be part of the delivered product (Robertson and Robertson 1999). Requirements can be functional or non-functional. Functional requirements are things the product must do to be useful within the context of the customer’s domain; non-functional requirements are properties or qualities that the product must have, which enhance the product.

Requirements gathering for design can be difficult because end-users must imagine what their needs in the future facility will be. The idea of using a prototype is to give people something that has the appearance of reality and is real enough that potential users can think of requirements that might otherwise be missed (Robertson and Robertson 1999).

4.2.1 Identifying Stakeholders

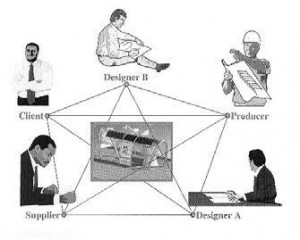

Various stakeholders play a key role in the design process of healthcare facilities, including project teams (designers, engineers and contractors), boards of trustees, financiers, vendors, suppliers, patients, caregivers, staff, community partners and donors (The Center for Health Design 2010). Stakeholders bring their own expertise, perspectives, and objectives to the design task (Kirk and Spreckelmeyer 1988). For the purpose of this study, stakeholders in the healthcare design process are divided into two categories: “Designers” of healthcare facilities, comprising architecture, engineering and construction (AEC) personnel, and “End-Users” from the healthcare context (see Figure 4-2). End-users of healthcare facilities include Care Receivers, Care Givers, and Facility Managers. Care Receivers comprise patients, their families and visitors. Care Givers include doctors, nurses, healthcare staff and home health aides. Finally, facility managers encompass all administrative staff and maintenance personnel.

Figure 4-2. Stakeholders in the healthcare design process: Designers and End-Users.

4.2.2 Investigating and Documenting Scenarios

Scenarios can be documented as a list of tasks performed by end-users of the facilities being designed. Documentation methods could include concept mapping based on interviews and focus group discussions with end-users and subject matter experts involved in the design and, where possible, observation during design reviews of the facilities. Concept mapping and focus groups with end-users are proposed to identify and extract scenarios of tasks that end-users may perform in their facilities. Semi-structured interviews with project stakeholders and end-users of healthcare facilities provide an understanding of how they use the facility and the tasks they perform in it.

Healthcare facility scenarios vary depending on the user, the type of task being performed and the issues they address. For instance, in one scenario, a nurse receives a signal at the nurse’s station to respond to a patient call, navigates to the relevant space, and finally performs a task within that space. Another scenario could involve facility management personnel who need to inspect the installed HVAC equipment: the worker navigates to the required area, identifies the equipment’s location and then performs the inspection.

The potential for using scenario-based design in interactive virtual prototypes is discussed in Chapter 2. The following subsections describe methods for documenting scenarios of activities that were tested in healthcare-related studies.

4.2.2.1 Envisioning experiences as scenarios of tasks

A pilot study was conducted in July 2010 in the Immersive Construction Lab (ICon Lab) at Penn State to review the design of a pharmacy in a medical office building (Leicht et al. 2010). Observations during design review and informal discussions with the pharmacists and owner’s representative helped establish the type of tasks performed in the pharmacy and the way different spaces are used in the facility.

During the design review session with the end-users (the pharmacists and the owner’s representatives), it was observed that the pharmacists often envisioned scenarios of tasks that they would perform in the pharmacy (see Figure 4-3). They talked about how they would go about filling prescriptions for their customers.

One of the issues discussed during the design review (Figure 4-4) was the need to extend the partition walls between the pharmacy counters to provide more privacy for the customers ordering their prescriptions. Other concerns that were raised included determining the location of trash cans for hazardous medical waste, and ensuring that the cabinets for narcotic medicine had ample storage space and that they could be locked.

During and after the design review session, the two pharmacists were interviewed to gain a better understanding of the tasks performed and their exact locations within the pharmacy. Based on their feedback, the following task-based scenario was constructed:

Role: Pharmacist

Scenario: Filling a prescription order

Tasks:

a. Get Order from customer (Pharmacy Counter)

i. Urgent- needs to be done right away (Work Station)

ii. Placed in the pipeline (Computer system)

b. Fill prescription (Work Station)

i. Requires narcotic drugs (Narcotics cabinet)

ii. Not on the shelves (Get from stock in Receiving Area)

c. Complete order and place it in storage cabinet

d. Give customer the prescription (Pharmacy Counter)
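The pharmacist scenario is effectively a small tree: a role, a scenario, ordered tasks with optional sub-cases, and the space where each step happens. The following is a minimal Python sketch, assuming a simple in-memory representation; the `Task` and `Scenario` classes and the `locations()` helper are hypothetical and not part of the EVPS. Walking the tree lists the spaces the prototype has to model.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Task:
    """One step of a scenario and the space where it happens (hypothetical structure)."""
    name: str
    location: Optional[str] = None  # None where the source names no location
    branches: List["Task"] = field(default_factory=list)  # alternative sub-cases


@dataclass
class Scenario:
    role: str
    title: str
    tasks: List[Task]

    def locations(self) -> List[str]:
        """Collect every distinct location, in order of first appearance."""
        seen: List[str] = []

        def visit(task: Task) -> None:
            if task.location and task.location not in seen:
                seen.append(task.location)
            for sub in task.branches:
                visit(sub)

        for task in self.tasks:
            visit(task)
        return seen


filling_prescription = Scenario(
    role="Pharmacist",
    title="Filling a prescription order",
    tasks=[
        Task("Get order from customer", "Pharmacy Counter", [
            Task("Urgent: needs to be done right away", "Work Station"),
            Task("Placed in the pipeline", "Computer system"),
        ]),
        Task("Fill prescription", "Work Station", [
            Task("Requires narcotic drugs", "Narcotics cabinet"),
            Task("Not on the shelves: get from stock", "Receiving Area"),
        ]),
        Task("Complete order and place it in storage cabinet"),
        Task("Give customer the prescription", "Pharmacy Counter"),
    ],
)

print(filling_prescription.locations())
# ['Pharmacy Counter', 'Work Station', 'Computer system', 'Narcotics cabinet', 'Receiving Area']
```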

4.2.2.2 Concept mapping to extract scenarios

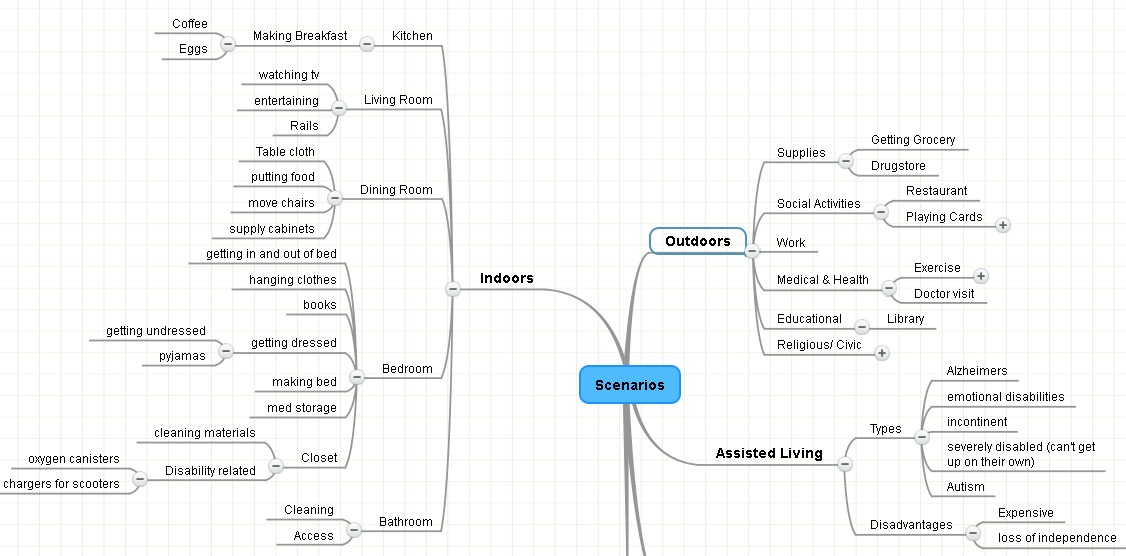

Another way to elicit scenarios from end-users is through concept maps. A concept map (or mind map) is a combination of drawing and text that attempts to represent information the way the brain stores it, by making associations and linking each new piece of information to something already known. Mind maps are beneficial for requirements work because they help spot connections in the information that users put forth (Robertson and Robertson 1999). Knowledge elicitation through concept mapping enables end-users to build up a representation of their domain knowledge (Crandall et al. 2006). Concept maps can help develop a hierarchical structure that frames scenarios and helps inform model content.

Figure 4-5 shows an example of concept mapping used to elicit and document scenarios from end-users. The concept mapping method was tested in a healthcare-related independent housing facility for the elderly. The formal documentation of scenarios for EVPS development and implementation using concept mapping and focus groups is discussed in more detail in Chapter 5.

Figure 4-5. Example of eliciting and documenting scenarios

4.2.2.3 Scenario Categories

After documentation, scenarios can be categorized according to whether they relate to way-finding in a facility or to performing a specific, detailed task. Scenarios are categorized based on the nature of the tasks being performed, and the steps involved in each are defined in detail. This helps identify the objects, and their corresponding behaviors, that will be required to simulate each scenario.

Table 4-1 shows the scenario categories and defines the tasks that can be simulated at each level of detail. Each category is described with examples of the types of healthcare facilities that can benefit most from it. The table also identifies the participants involved and the objects required for each type of scenario.

| SCENARIOS | Description | Healthcare Facility Examples | End-Users/Stakeholders | Objects involved | LoD for geometry |
|---|---|---|---|---|---|
| Movement-based | Way-finding and navigating through the facility from one location to another | Large hospital facilities: going through long corridors to reach a desired location | Patients, visitors, HC staff, nurses, doctors, new staff, facility managers | Facility to navigate, mini-map, FPC, notes and alerts in HUD | Low–High |
| Task-based | Accomplishing a specific task that could involve relocating or moving certain objects or people to a desired location | Nurse moving IV to patient bed; locating an electric outlet near the patient bed and using it | Nurses, HC staff, doctors, visitors, patients, patient’s family, facility managers, repair personnel | Facility, objects or other avatars, FPC, notes and alerts in HUD, hand movements of FPC* | High–Very high |
| Process-based | Accomplishing a combination of tasks and movements to undergo a certain process | Doctor performing an operation and moving the patient from one area to another; admitting a patient in the ER and taking them for tests or to the patient room; home health aide assisting the elderly with daily life activities | Doctors, nurses, HC staff, visitors, facility management, repair personnel | Facility, objects or other avatars, FPC, notes and alerts, hand movements of FPC* | Medium–Very high |
| Spatial Organization | Examining the position of objects to ascertain whether the layout is efficient, facilitates flow, meets requirements and follows anthropometric rules | HC staff examine whether there is enough space and a correct layout of equipment to perform certain tasks; equipment and furniture are relocated to improve the layout | Designers, engineers, nurses, doctors, HC staff, facility managers, patients* | Facility, objects or other avatars, FPC, notes and alerts in HUD | Medium–Very high |
| 3rd person view | Looking at and examining space from various locations and through the eyes of other user roles | View of patient room from the bed; view and experience of going through an MRI scan*; view of the facility from a wheelchair | Designers, engineers, nurses, doctors, HC staff, new staff, medical students, facility managers | Facility, objects or other avatars, FPC, notes and alerts in HUD | Medium–High |
| Inquiry-based | Retrieving information and data associated with certain objects | Designers and facility managers inspect the facility and retrieve building-related information (BIM); HC personnel inquire about certain equipment in the facility | Designers, engineers, facility managers, HC staff*, new staff*, medical students* | Facility, objects, BIM data, FPC, notes and alerts in HUD | Low–Very high |

\* Optional. FPC = First Person Controller; HUD = heads-up display; HC = healthcare.

Table 4-1. Scenario categories based on Level of Detail (LoD) required.

Categorizing scenarios by type helps identify the model content and interactivity required. Most importantly, it helps determine the level of detail (LoD) required for modeling the digital content (facility, objects, avatars) in the various authoring tools so that the scenarios can be simulated effectively. For instance, a way-finding scenario in which a hospital visitor walks from one end of the facility to the other may not need the LoD required for a detailed task in a fixed location, such as a nurse checking a patient’s blood pressure.
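The LoD ranges in Table 4-1 can be treated as ordinal values. The sketch below is a hypothetical helper, not a procedure from this study: it assumes that a space shared by several scenarios must be modeled at least at the highest of their minimum LoD levels. The category keys (`"movement"`, `"task"`, and so on) are illustrative labels for the table rows.

```python
from enum import IntEnum
from typing import Dict, Iterable, Tuple


class LoD(IntEnum):
    """Ordinal levels of detail used in Table 4-1."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    VERY_HIGH = 4


# (minimum, maximum) LoD per scenario category, transcribed from Table 4-1
LOD_RANGE: Dict[str, Tuple[LoD, LoD]] = {
    "movement": (LoD.LOW, LoD.HIGH),
    "task": (LoD.HIGH, LoD.VERY_HIGH),
    "process": (LoD.MEDIUM, LoD.VERY_HIGH),
    "spatial": (LoD.MEDIUM, LoD.VERY_HIGH),
    "third_person": (LoD.MEDIUM, LoD.HIGH),
    "inquiry": (LoD.LOW, LoD.VERY_HIGH),
}


def minimum_lod(categories: Iterable[str]) -> LoD:
    """Lowest LoD meeting the lower bound of every scenario category in a space.

    Assumption: a space used by several scenarios is modeled at least at the
    highest of their minimum levels; with no scenarios, LOW is returned.
    """
    return max((LOD_RANGE[c][0] for c in categories), default=LoD.LOW)


print(minimum_lod(["movement"]).name)          # LOW  (corridor used for way-finding)
print(minimum_lod(["movement", "task"]).name)  # HIGH (patient room with a bedside task)
```

For the example above, a corridor used only for way-finding could stay at Low LoD, whereas a patient room that also hosts the blood-pressure task would need at least High LoD.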

4.2.3 Framework of scenarios

For requirements analysis, a framework for mapping scenarios to the spaces involved and the level of detail required is proposed (see Figure 4-6). The categorization and analysis of the documented scenarios helped develop a framework for structuring scenarios of tasks that end-users can perform in virtual environments. The framework was initially developed to represent modeling needs and evolved through repeated testing on smaller healthcare-related projects such as patient rooms, small operating suites, independent housing for the elderly, medical laboratories and pharmacies.

Based on the scenario framework, several use scenarios can be identified, developed and documented for use during virtual prototyping system development. The framework can be leveraged to identify the specific spaces and objects that need to be modeled, as well as any additional object and user interface representations essential for depicting each scenario. For instance, an identified patient emergency scenario may require quick patient transport from the hospital entrance to a specific location within the Emergency Department (ED) to provide critical care. This scenario carries multiple forms of information that translate into a list of specifications to include in the experience-based virtual prototype that the end-user reviews. Based on the scenario, the specification elements could include location and path information shown in an abstract mini-map; temporary or movable objects within the facility (e.g., the patient bed, doors that open, and other elements that may not be included in the facility model); and representations of the people involved in the scenario (e.g., avatars for the patient and the patient transporter).

The scenario framework facilitates structured organization of the scenarios in a hierarchical object tree setup and enables a formal representation of specifications for development of the experience-based virtual prototypes.
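The hierarchical organization described above (space, then user role, then scenario, then specification elements) maps naturally onto nested dictionaries. The sketch below encodes the Emergency Department example under that assumption; `ScenarioSpec`, `model_checklist` and the role and location names are hypothetical. The helper derives the extra objects and avatars that must be modeled for a space.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class ScenarioSpec:
    """Specification elements a scenario adds to the prototype (hypothetical fields)."""
    name: str
    path_info: List[str] = field(default_factory=list)      # route shown on the mini-map
    extra_objects: List[str] = field(default_factory=list)  # temporary or movable objects
    avatars: List[str] = field(default_factory=list)        # people involved


# Scenario framework: space -> user role -> scenarios for that role
Framework = Dict[str, Dict[str, List[ScenarioSpec]]]

framework: Framework = {
    "Emergency Department": {
        "Patient transporter": [
            ScenarioSpec(
                name="Patient emergency",
                path_info=["Hospital entrance", "ED critical care location"],
                extra_objects=["Patient bed", "Doors that open"],
                avatars=["Patient", "Patient transporter"],
            ),
        ],
    },
}


def model_checklist(fw: Framework, space: str) -> List[str]:
    """Sorted union of objects and avatars required by every scenario in a space."""
    items: Set[str] = set()
    for scenarios in fw.get(space, {}).values():
        for spec in scenarios:
            items.update(spec.extra_objects)
            items.update(spec.avatars)
    return sorted(items)


print(model_checklist(framework, "Emergency Department"))
# ['Doors that open', 'Patient', 'Patient bed', 'Patient transporter']
```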

4.3 EVPS DESIGN PROCESS

In the second phase of development, the design process of the EVPS proposes the system architecture and lays down the approach for translating specifications derived from requirements analysis into interactive virtual prototypes.

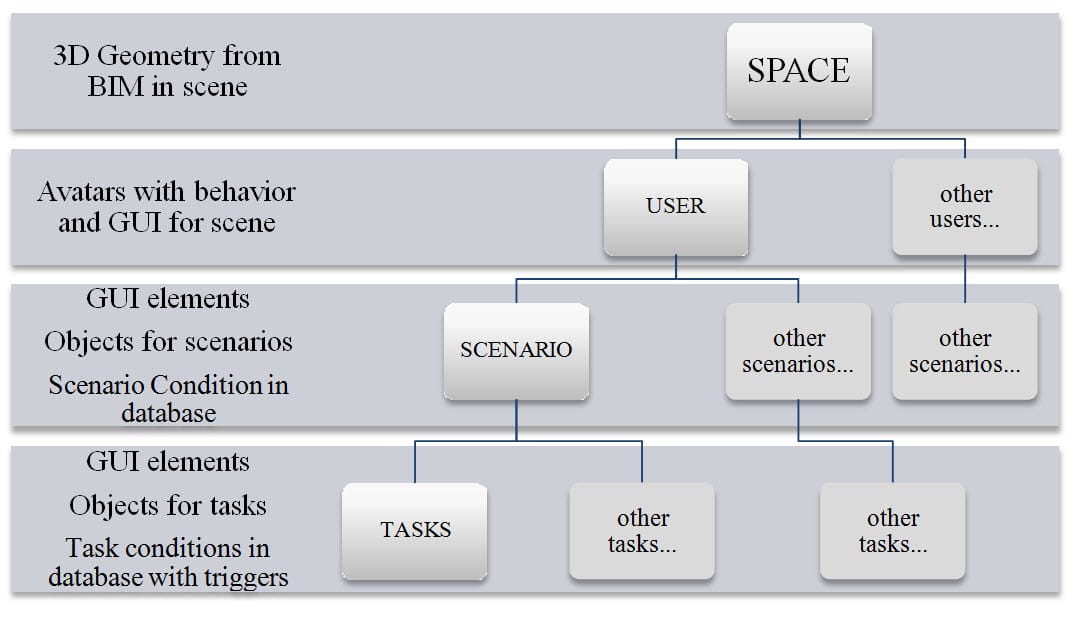

4.3.1 System Architecture

The system architecture for embedding scenarios in interactive virtual prototypes consists of three primary components: the element library, the scenario engine and the user scene. The scenario engine is the main component of the EVPS application, as it combines the task-based scenarios with geometric data and user input in the virtual environment. The element library draws on other applications, databases and libraries. The user scene displays the virtual prototype and obtains feedback through user input. The system architecture for the EVPS application is shown in Figure 4-7. Within the application, the 3D Element Library holds three types of geometric models: space (facility) models, object (equipment) models and avatar (user role) models. The facility and equipment/object models are obtained from a geometric database, while the user role models are retrieved from the Avatar Object library. The Scenario Engine adds scenario and task tracking scripts from the Scripting Library by attaching them to the relevant objects. The User Scene of the EVPS application contains the 3D rendering module and a GUI widget that display the virtual healthcare facility prototype, along with its objects and user roles, on the user’s screen or output device. The objective is to allow the user to navigate through the space, interact with various objects and perform specific tasks and scenarios within the virtual facility prototype dynamically. This generic architecture could be implemented in various game engine environments, but the specific structure of the data files and formats will vary with the game engine.

Figure 4-7. System Architecture of Experience-based Virtual Prototyping System.

4.3.1.1 Element Library

The element library functions as a repository for the 3D geometric data of the facility, including healthcare equipment and other objects that must be displayed in the virtual environment. Since most of the 3D geometric data is authored in various BIM authoring tools, the application needs to be interoperable with these tools, so that all data is stored in file formats that can be easily exported from the authoring tools and imported into the EVPS application. The element library communicates with the BIM database to get the 3D geometric data of the facility required for design review within the virtual environment. Apart from 3D geometric data, real-time rendering requires additional attribute information about textures, materials and lighting. Another important attribute for real-time walkthroughs of a facility in a virtual environment is collision detection on walls, floors, ceilings and similar elements.

In addition to geometric and attribute information, certain objects have inherent behaviors that are included in the element library. For instance, all door elements in the facility could be animated to slide or swing open depending on the door type and hinge direction.

Healthcare equipment objects such as the patient bed could be animated and configured so that the user (a patient) can sit or lie on them. The element library combines the 3D geometric data obtained from a BIM authoring application with the additional behaviors described above, such as attributes, physics and animations associated with the various elements of a healthcare facility. The element library also contains information about the avatars for the user roles used in scenario-based design review: it stores the 3D geometric data for each avatar representation, along with the behaviors, features, functions and constraints associated with each type of user role.
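A minimal sketch of an element library that keeps the three model types together with their attributes and behaviors. Everything here is hypothetical: the class names, the behavior strings and the `.fbx` file names are placeholders, and in the actual EVPS this role is played by Unity's Assets folder rather than by Python objects.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List


class ElementKind(Enum):
    """The three model types held by the element library."""
    SPACE = "space"    # facility models
    OBJECT = "object"  # equipment models
    AVATAR = "avatar"  # user role models


@dataclass
class Element:
    name: str
    kind: ElementKind
    geometry_file: str  # hypothetical file exported from a BIM/3D authoring tool
    attributes: Dict[str, str] = field(default_factory=dict)  # texture, material, lighting
    collidable: bool = False  # whether walkthroughs collide with this element
    behaviors: List[str] = field(default_factory=list)  # animation/behavior names


def door_behavior(door_type: str, hinge: str) -> str:
    """Choose a door animation from door type and hinge side (names are hypothetical)."""
    if door_type == "sliding":
        return "slide_open"
    return f"swing_open_{hinge}"


class ElementLibrary:
    """In-memory stand-in for the element library repository."""

    def __init__(self) -> None:
        self._elements: Dict[str, Element] = {}

    def add(self, element: Element) -> None:
        self._elements[element.name] = element

    def by_kind(self, kind: ElementKind) -> List[Element]:
        return [e for e in self._elements.values() if e.kind is kind]


library = ElementLibrary()
library.add(Element("Patient room", ElementKind.SPACE, "patient_room.fbx",
                    {"material": "vinyl floor"}, collidable=True))
library.add(Element("Door 101", ElementKind.OBJECT, "door_101.fbx",
                    behaviors=[door_behavior("swing", "left")]))
library.add(Element("Patient bed", ElementKind.OBJECT, "patient_bed.fbx",
                    behaviors=["sit", "lie_down"]))
library.add(Element("Nurse", ElementKind.AVATAR, "nurse.fbx",
                    behaviors=["walk", "carry_equipment"]))
print([e.name for e in library.by_kind(ElementKind.OBJECT)])  # ['Door 101', 'Patient bed']
```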

4.3.1.2 Scenario Engine

Scenarios for interactive design review in a virtual environment are implemented in the scenario engine. The scenario engine links behaviors and scenario and task tracking scripts from the scripting library to the 3D geometric objects and models in the space. This information is organized in a hierarchical data structure, developed as a scenario framework, in which each design review space of the healthcare facility can be reviewed by various user roles. These user roles range from AEC design professionals and facility managers to end-users such as nurses, patients and other healthcare staff. Since every user performs distinct tasks within the space and has a different agenda for design review, the functions and features afforded to each user role within the virtual environment differ. For instance, nurses might want to know whether they can conveniently carry out certain duties, such as placing a particular piece of patient monitoring equipment near the patient’s bed. Facility managers, however, might be more interested in checking the location and ease of access of air filters that need to be replaced in a fan coil unit.

Therefore, each user role should have a different Graphical User Interface (GUI) that can be customized for that particular user.

The GUI displays a distinct set of scenarios, which are further broken down into a series of tasks.

The functions performed by the scenario engine are as follows:

– Load appropriate healthcare space or facility model from element library in the user scene.

– Based on the space chosen, receive the user’s selection of a role in healthcare facility design and load the first person controller (FPC) or avatar with the behavior relevant to that role. The GUI elements, with functions and features appropriate for the role, are loaded based on the role selected.

– Load the scenarios defined for the selected user role. Display the GUI elements, any additional objects needed for the scenario, and the list of tasks performed during the scenario.

– Once a scenario is activated, keep track of the steps or tasks performed to complete that particular scenario by updating and retrieving scenario conditions from the behavior scripting library.
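The four scenario engine functions listed above can be sketched as a small state holder. This is an illustration only, assuming that tasks must be completed in the listed order; `ScenarioEngine`, `ScenarioDef` and their methods are hypothetical names, and loading the FPC, avatar and GUI is reduced to recording the selection. The example uses the nurse scenario from Section 4.2.2.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ScenarioDef:
    name: str
    tasks: List[str]  # ordered steps that complete the scenario


@dataclass
class ScenarioEngine:
    # space -> user role -> scenarios available to that role
    catalog: Dict[str, Dict[str, List[ScenarioDef]]]
    space: Optional[str] = None
    role: Optional[str] = None
    active: Optional[ScenarioDef] = None
    done: List[str] = field(default_factory=list)

    def load_space(self, space: str) -> List[str]:
        """Load a space and return the user roles defined for it."""
        self.space = space
        return sorted(self.catalog[space])

    def select_role(self, role: str) -> List[str]:
        """Record the role (stands in for loading the FPC/avatar and role GUI)
        and return the scenarios available to it."""
        self.role = role
        return [s.name for s in self.catalog[self.space][role]]

    def activate(self, name: str) -> List[str]:
        """Start a scenario and return its task list for display in the GUI."""
        self.active = next(s for s in self.catalog[self.space][self.role] if s.name == name)
        self.done = []
        return list(self.active.tasks)

    def complete_task(self, task: str) -> bool:
        """Track progress in task order; return True once the scenario is complete."""
        if self.active is None or len(self.done) == len(self.active.tasks):
            raise RuntimeError("no scenario in progress")
        expected = self.active.tasks[len(self.done)]
        if task != expected:
            raise ValueError(f"expected {expected!r}, got {task!r}")
        self.done.append(task)
        return len(self.done) == len(self.active.tasks)


engine = ScenarioEngine({
    "Patient room": {
        "Nurse": [ScenarioDef("Respond to patient call", [
            "Receive call at nurse's station",
            "Navigate to patient room",
            "Check blood pressure",
        ])],
    },
})
print(engine.load_space("Patient room"))  # ['Nurse']
print(engine.select_role("Nurse"))        # ['Respond to patient call']
tasks = engine.activate("Respond to patient call")
finished = [engine.complete_task(t) for t in tasks]
print(finished)                           # [False, False, True]
```

A real implementation would more likely allow optional or branching tasks, like the sub-cases in the pharmacist scenario; strict ordering just keeps the sketch short.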

4.3.1.3 User Scene

The user scene is the medium of communication, or access point, between the users and the EVPS. As the user’s connection to the virtual prototype, the user interface affects the design of the virtual prototype itself (Sherman and Craig 2003). A user interface is the part of the application with which users interact in order to undertake their tasks and achieve their goals (Stone et al. 2005).

4.3.2 Storyboarding the Graphical User Interface

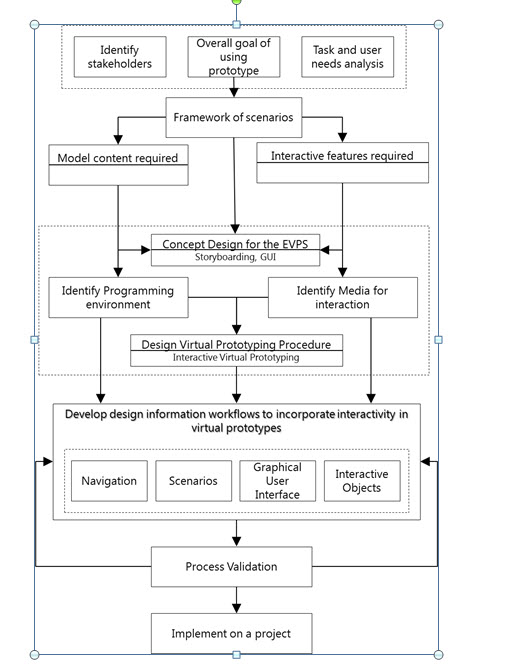

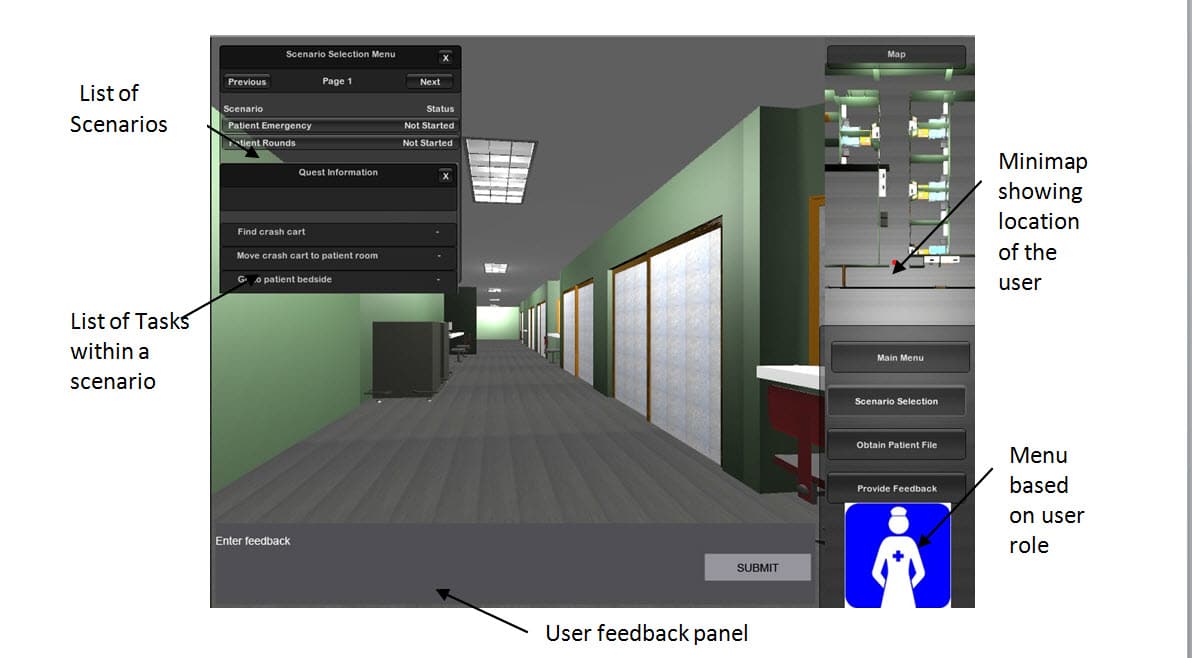

The Graphical User Interface (GUI) presents to the user the features and widgets embedded in an application. The GUI for each project can be custom designed based on the scenarios and model content required. The EVPS application concept was designed using storyboards that allow scenarios to be loaded within the game environment, as shown in Figure 4-8.

Storyboards are sequences of sketches or screen layouts that focus on the main actions and interactions in a possible situation. Storyboards take textual descriptions of task flows (such as scenarios) and turn them into visual illustrations of interactions (Stone et al. 2005).

Storyboarding is a valuable tool for conveying the functionality of a proposed solution, and it also helps in collecting requirements and generating feedback (Gruen 2000).

Figure 4-9 shows a sequence of storyboards designed during the conceptualization of the EVPS. The sequence begins with the scene, or facility, depicting particular healthcare spaces. Once a specific space is chosen, the GUI displays the roles within the healthcare facility that can be loaded as interactive avatars. Finally, once the healthcare facility space and role have been chosen, the EVPS application loads the relevant GUI components with the corresponding scenario information, as well as furniture, fixtures and equipment.

4.3.3 Identifying Media for Interaction

The user interface is made up of hardware and software design components. Hardware design components, or interaction devices, are the input and output devices. Software design components are generated by the computer system or the scenario engine. Based on the media identified for interaction, the scenario engine needs to be programmed so that the software components respond to user input and are rendered on the chosen display output.

4.3.3.1 User Input media

Virtual prototypes can only be interactive if they accept real-time input from the user. There are many ways of getting user input into the system, ranging from simple mouse and keyboard input to more complex physical controls such as wands, flying sticks, joysticks, data gloves and platforms. Virtual reality interfaces such as motion tracking and eye tracking are passive methods that can be used to input the user’s location and orientation in the virtual facility prototype (Sherman and Craig 2003). Latency between user input and display response is the cumulative result of many system components: input devices, computation of world physics, graphical rendering routines and formats.
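Because end-to-end latency accumulates across stages, a simple budget helps show which component dominates. The per-stage timings below are hypothetical placeholders, not measurements from this study, and the sketch assumes the stages run sequentially so that their latencies add; `latency_budget` is a hypothetical helper.

```python
from typing import Dict, Tuple


def latency_budget(stages_ms: Dict[str, float]) -> Tuple[float, str]:
    """Return total end-to-end latency and the stage contributing most to it.

    Assumes the stages run one after another, so their latencies add up.
    """
    total = sum(stages_ms.values())
    worst = max(stages_ms, key=stages_ms.get)
    return total, worst


# Hypothetical per-stage timings in milliseconds (not measured values)
stages = {"input device": 8, "world physics": 4, "rendering": 17, "display": 10}
total, worst = latency_budget(stages)
print(f"{total} ms end-to-end, dominated by {worst}")  # 39 ms end-to-end, dominated by rendering
```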

4.3.3.2 Output display media

The choice of visual display affects application development, depending on how easily the particular system can be implemented. Display media can range from simple desktop, handheld and large-screen projection displays to more immersive virtual reality displays, including stereoscopic projection and head-mounted display systems. As with input media, the EVPS needs to be developed in accordance with the chosen media’s requirements for resolution and compatibility with file formats for real-time rendering (Dunston et al. 2010; Sherman and Craig 2003).

According to studies by Nikolic (2007) and Zikic (2007), the best configuration for evaluating designed spaces depends on the purpose and context of use of the virtual prototypes. Their findings confirm the usefulness of a large screen and a wide field of view, which provide a scale reference and sufficient spatial information. However, for end-user presentations, they suggest that displaying a highly detailed, highly realistic model on a small screen with a narrow field of view is a useful and affordable combination.

4.3.4 Interactivity Environment Selection

As discussed in the literature review chapter, game engine applications provide greater functionality than 3D modeling applications for embedding interactivity in virtual prototypes. Many game engines have been used in the AEC industry, such as the XNA game engine for construction simulation (Nikolic et al. 2010) and architectural walkthroughs (Yan et al. 2011), as well as C4Engine, Torque and Unreal Editor (Shiratuddin and Fletcher 2007). Even virtual worlds such as Second Life have been used for interacting with facility prototypes. Earlier attempts to incorporate interactivity in virtual prototypes relied on custom applications, which made development very cumbersome. However, most commercially available applications also involve a steep learning curve, labor-intensive development and high costs.

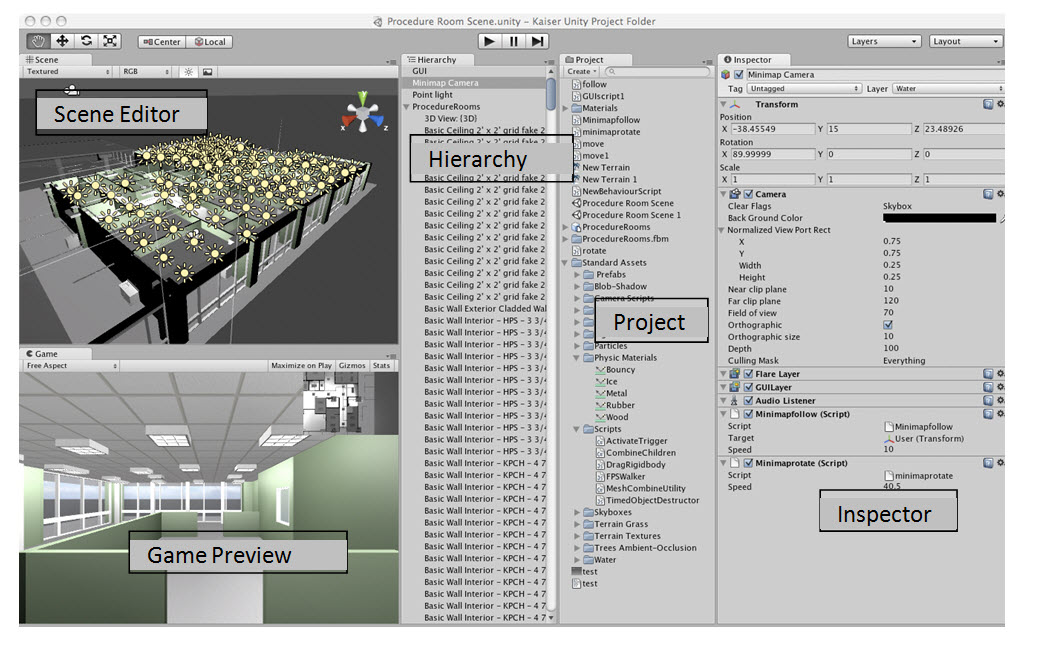

Based on a review of several real-time rendering engines, the Unity game engine was considered a feasible option for developing the Experience-based Virtual Prototyping System (EVPS) application. Unity was chosen because it has a fast rendering environment and a robust feature set that allows for customization, is affordable, and has a relatively easy drag-and-drop interface that makes it easy to learn and use (see Figure 4-10). The game engine runs on both Mac and PC operating systems, and its scripts (e.g., JavaScript or C#) are Just-In-Time (JIT) compiled on the Mono runtime, an open-source implementation of the .NET framework. For physics and rendering, it uses the Nvidia PhysX physics engine and OpenGL and DirectX for 3D rendering; audio is handled by the FMOD audio engine. There are also various workflows for conveniently transferring geometric data from BIM and CAD authoring applications such as Autodesk Revit, Google SketchUp and Autodesk 3ds Max to the Unity game engine. These workflows also determined how much information was transferred, including textures and other intelligent information attached to the building information model.

Each Unity project folder contains a default Assets folder that serves as the element library for the EVPS application and stores all the data associated with the healthcare facility. The user scene file is located in this Assets folder and includes the main levels as well as the different zones and spaces of the healthcare facility. All elements used in the user scene, including game objects, prefabs (reusable objects, typically with attached behavior scripts), textures and other components, along with their behavior scripts, are stored in the Assets folder. These elements, referred to as assets in the Unity game engine, can be reused from one project to another. Within the Unity interface, the elements stored in the Assets folder are displayed in the Project tab, as shown in Figure 4-10. Digital models of the facility and other object geometry are stored as assets within the project’s database.
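Since the Assets folder acts as the element library, a quick inventory of its contents can help when reusing assets across projects. The Python sketch below groups files by extension; the extension-to-category mapping is an assumption covering common Unity file types (`.unity` scenes, `.prefab` prefabs, `.mat` materials, `.js`/`.cs` scripts), and `categorize` and `inventory` are hypothetical helpers. The `.meta` files that Unity creates alongside each asset are skipped.

```python
from collections import defaultdict
from pathlib import Path, PurePosixPath
from typing import Dict, Iterable, List

# Assumed mapping of common Unity asset extensions to element-library categories
CATEGORIES = {
    ".unity": "scenes",
    ".prefab": "prefabs",
    ".fbx": "models", ".obj": "models", ".dae": "models",
    ".png": "textures", ".jpg": "textures", ".tga": "textures",
    ".mat": "materials",
    ".js": "scripts", ".cs": "scripts",
}


def categorize(relative_paths: Iterable[str]) -> Dict[str, List[str]]:
    """Group asset paths (relative, '/'-separated) by category."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for rel in sorted(relative_paths):
        suffix = PurePosixPath(rel).suffix.lower()
        if suffix == ".meta":  # Unity's per-asset metadata files
            continue
        groups[CATEGORIES.get(suffix, "other")].append(rel)
    return dict(groups)


def inventory(assets_dir: Path) -> Dict[str, List[str]]:
    """Walk an Assets folder on disk and categorize every file in it."""
    files = (p.relative_to(assets_dir).as_posix()
             for p in assets_dir.rglob("*") if p.is_file())
    return categorize(files)
```

Usage would be along the lines of `inventory(Path("MyProject/Assets"))`, where the project path is a placeholder.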

The next section outlines the preliminary development process, focused specifically on importing building information models and digital model content in the game engine environment.

4.4 EVPS DEVELOPMENT PROCESS

One of the objectives of the research is to streamline the EVPS development process so that the time and effort required to create interactive virtual prototypes of healthcare facilities can be reduced. The steps for developing information exchange workflows for the EVPS are as follows:

– Identify modeling and interaction tools

– Identify and list the file formats that can be exchanged between the applications

– Test exchange of model information using different file formats

– Note any issues and challenges with data exchange

4.4.1 Design Information Workflows

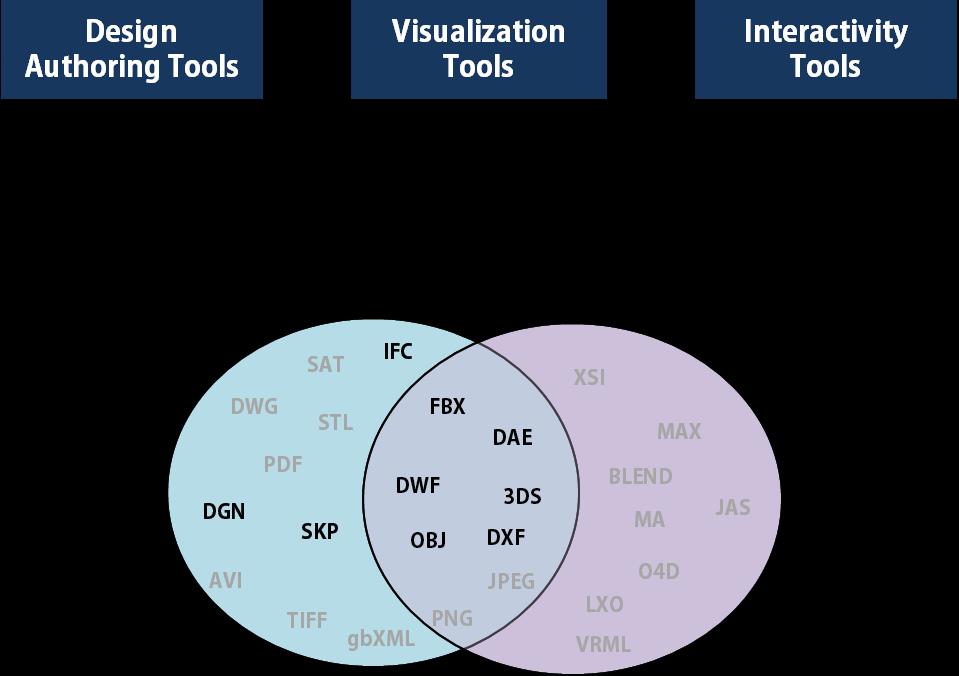

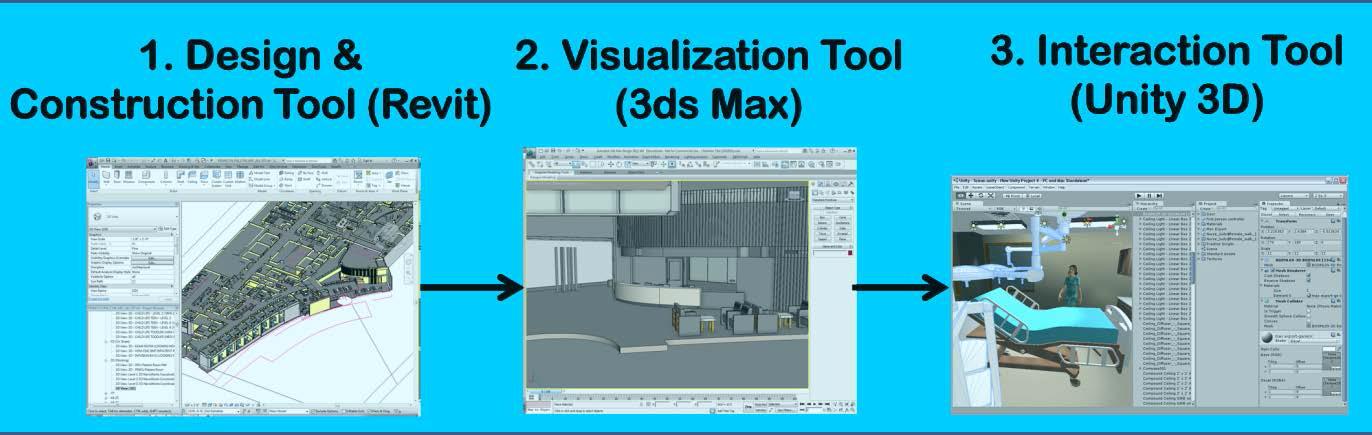

The system and process design phase helps identify appropriate modeling and software implementation tools based on the goals and objectives established for developing the EVPS. While some projects may need to be modeled from scratch, others may already have highly detailed models developed by the project team. Once the modeling application is chosen, it is important to identify the file formats (see Figure 4-11) that can be exchanged between the chosen software applications.

Figure 4-11. Interoperable file formats to transfer geometry content between tools.

The intent of testing various workflows is to transfer as much model content as possible into the Unity game engine so that only a limited amount of modeling is needed within it.

Experiments transferring model data in different file formats examined how much data came through the various applications and noted any issues or missing information. The tests identified the most efficient workflow and documented this design information exchange process from 3D modeling software to interactive tools.

Various workflows were tested to make the best use of 3D content from different BIM authoring tools in the Unity game engine. The advantage of using existing BIM authoring tools, such as Revit Architecture, was that existing building information models could be reused to develop the interactive virtual prototypes. For this purpose, workflows were developed to transfer model content from building information modeling tools such as Autodesk Revit, along with other visualization and 3D modeling tools such as Autodesk 3D Studio Max and Google SketchUp.

Another benefit of exporting models directly into Unity was that design changes made to the model in the native authoring tool (such as Autodesk Revit) could simply be exported again, and the changes would be updated automatically in Unity.

4.4.2 Information exchange challenges

During workflow development, certain interoperability issues were encountered while importing models from Revit into Unity which were primarily related to textures, lighting, and overall organization of the model hierarchy. Interoperability is the ability of several systems, identical or completely different, to communicate without any ambiguity and to operate together. Maldovan et al. (2006) and Dunston et al. (2010) note that the lack of interoperability inhibits development of virtual mock-ups. Data are lost when models are transferred between applications and when models are sent to immersive virtual environments. Furthermore, depending on the type of display output, most applications do not support 3D stereoscopic visualization of models in real-time.

While the 3D meshes of the lighting fixtures transferred successfully into Unity, their lighting characteristics did not. Lighting for the spaces can be added within the Unity engine, but making the lighting as realistic as possible typically takes a large amount of time; successfully carrying these characteristics through could therefore save the project team time. One possible solution to this lighting issue is to incorporate baked textures into the lighting workflow. By importing a facility model into 3D Studio Max and using render to texture on the objects, baked textures depicting the lighting effect can be transferred to the Unity model. However, this process would have to be repeated whenever the design is revised in Revit and transferred again to Unity.

4.4.3 Real-time rendering challenges

Building or facility models typically contain walls, ceilings and floors that partition space into rooms. The geometric content of these models comprises 3D meshes, textures, and lighting attributes. Larger models have higher polygon counts, which require more rendering resources and can degrade the performance of the real-time simulation.

According to Funkhouser et al. (1996), virtual simulation prototypes of facility models should combine visual realism with short response times and a fast, uniform frame rate. A high level of detail is needed to convey a sense of presence and realism comparable to the actual constructed space (Nikolic 2007; Zikic 2007). The sense of realism is low when the response to user input is slow. Latency between user input and display response accumulates across many system components: input devices, computation of world physics, graphical rendering routines and formats.
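As a rough worked illustration, end-to-end latency can be treated as the sum of the delays contributed by each stage, while a target frame rate fixes the time budget for rendering each frame (1000/fps milliseconds, about 16.7 ms at 60 frames per second). The Python sketch below assumes that simple additive model; the stage names and millisecond values are hypothetical placeholders, not measurements from this research.

```python
def frame_time_ms(fps: float) -> float:
    """Time available to produce one frame at the given frame rate."""
    return 1000.0 / fps


def end_to_end_latency_ms(stage_delays_ms: dict) -> float:
    """Approximate input-to-display latency as the sum of per-stage delays."""
    return sum(stage_delays_ms.values())


# Hypothetical per-stage delays in milliseconds (placeholders, not measured values).
stages = {"input device": 8.0, "physics": 4.0, "rendering": 16.7, "display": 10.0}

print(f"frame budget at 60 fps: {frame_time_ms(60):.1f} ms")         # frame budget at 60 fps: 16.7 ms
print(f"frame budget at 30 fps: {frame_time_ms(30):.1f} ms")         # frame budget at 30 fps: 33.3 ms
print(f"estimated latency: {end_to_end_latency_ms(stages):.1f} ms")  # estimated latency: 38.7 ms
```

In this simple model, reducing the polygon count shortens the rendering stage, which both raises the achievable frame rate and lowers overall latency.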

The higher the frame rate (the number of images displayed per second), the more smoothly the real-time images are presented, with greatly reduced lag. This allows users to experience the virtual prototypes of facilities with a greater level of immersion and interaction (Shiratuddin 2007). In models where large portions of the geometry are hidden, or occluded, by polygons in front of the user's viewpoint, occlusion culling (skipping the rendering of those hidden portions) can be used to improve the frame rate.
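Occlusion culling is usually driven by visibility data computed ahead of time. The sketch below illustrates the idea with a precomputed potentially visible set (PVS) per room: each room lists the rooms that could be seen from it, and only objects in those rooms are submitted for rendering. It is a simplified, hypothetical illustration in Python with invented room and object names; Unity's built-in occlusion culling bakes its own, finer-grained visibility data in the editor rather than using code like this.

```python
# Hypothetical precomputed visibility: room -> rooms that may be visible from it.
PVS = {
    "corridor": {"corridor", "patient_room_1", "nurse_station"},
    "patient_room_1": {"patient_room_1", "corridor"},
    "nurse_station": {"nurse_station", "corridor"},
    "operating_room": {"operating_room"},
}

# Hypothetical scene objects and the room each one sits in.
OBJECTS = {
    "bed_1": "patient_room_1",
    "desk": "nurse_station",
    "surgical_light": "operating_room",
}


def objects_to_render(viewer_room: str, pvs: dict, objects: dict) -> list:
    """Return the objects in rooms potentially visible from the viewer's room."""
    visible_rooms = pvs.get(viewer_room, {viewer_room})  # unknown room: draw only itself
    return sorted(name for name, room in objects.items() if room in visible_rooms)


print(objects_to_render("corridor", PVS, OBJECTS))        # ['bed_1', 'desk']
print(objects_to_render("operating_room", PVS, OBJECTS))  # ['surgical_light']
```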

4.4.4 Optimal information exchange workflow

The most efficient workflow for embedding interactivity in virtual prototypes was found to be importing files into the Unity game engine in the FBX file format (see Figure 4-12). One benefit of the FBX file format was that, when files were transferred within the Autodesk suite, it retained information pertaining to 3D meshes, textures and camera locations. However, when an FBX file was exported into Unity, all texture information associated with the model was lost, and the materials and textures had to be reapplied. Since the Unity engine had an extensive built-in library of textures and materials, basic realistic textures could be applied within Unity with relative ease, although this required additional effort. Other workflows, particularly those through other visualization applications, may not encounter this texture issue, although similar issues are not uncommon when moving from CAD/BIM authoring applications to interactive game engines.
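Because materials and textures had to be reapplied after each FBX import, it can help to keep a mapping from the material names that arrive with the model to materials already set up in the Unity project, so that reapplication is repeatable after every re-export. The Python sketch below shows only that lookup logic. The material names and the fallback material are hypothetical; in Unity the actual assignment would be done in the editor or by an editor script.

```python
FALLBACK_MATERIAL = "Placeholder_Grey"  # hypothetical material used when no match exists


def remap_materials(imported: dict, library: dict, fallback: str = FALLBACK_MATERIAL) -> dict:
    """Map each imported object's source material name to a project material.

    imported: object name -> material name carried over from the authoring tool
    library:  source material name -> material prepared in the Unity project
    """
    return {obj: library.get(src, fallback) for obj, src in imported.items()}


def unmatched_materials(imported: dict, library: dict) -> set:
    """List source material names that still need a project material."""
    return {src for src in imported.values() if src not in library}


imported = {"Wall_01": "Paint - Interior White", "Floor_01": "Vinyl - Sheet", "Door_01": "Wood - Oak"}
library = {"Paint - Interior White": "WhitePaint", "Vinyl - Sheet": "VinylFloor"}

print(remap_materials(imported, library))
# {'Wall_01': 'WhitePaint', 'Floor_01': 'VinylFloor', 'Door_01': 'Placeholder_Grey'}
print(unmatched_materials(imported, library))  # {'Wood - Oak'}
```

The unmatched list doubles as a to-do list after each re-export: only new material names need manual attention.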

4.5 INCORPORATING INTERACTIVITY IN EVPS

Interactive virtual prototypes can prove to be effective design communication tools between professionals and end-users, eliciting domain-specific tacit knowledge from both parties to create a better understanding of the facility, which in turn leads to better design.

Interactive features are important for end-users because they help them relate what they see in virtual environments to the real world (Wang 2002). Interactive features not only enable end-users to interact with the virtual prototype during design review, but also give AEC professionals an opportunity to review the prototype through the roles and points of view of the end-users.

Interactivity is defined as the extent to which a user can participate in modifying the form and content of a mediated environment in real time (Steuer 1995). The role of interactivity is to generate greater involvement or engagement with content (Sundar 2007). Most, if not all, modern-day interfaces are interactive, empowering users to take action in highly innovative and individualized ways. Interactivity influences users by increasing or decreasing perceptual bandwidth, offering customization options and building contingency into user-system exchanges. Combined, these factors contribute in different ways to user engagement in terms of cognition, attitude, and behavior.

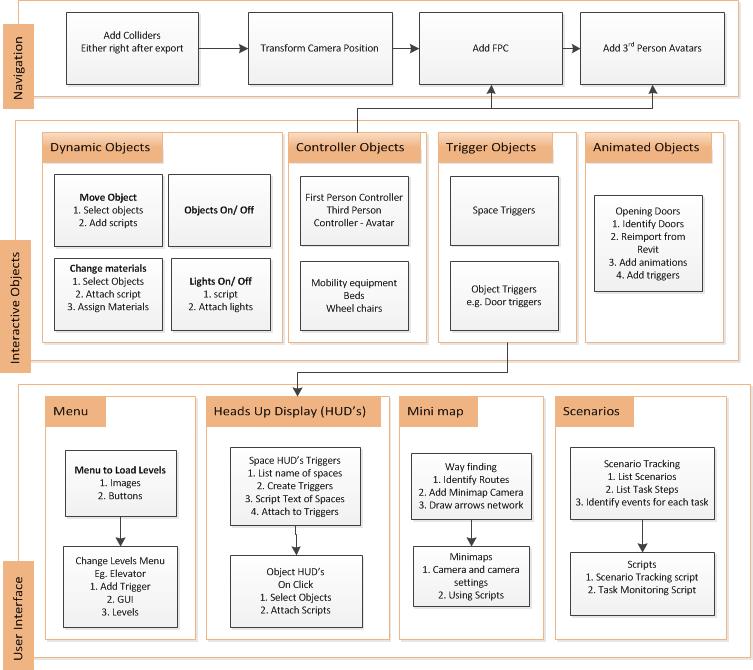

Several interactive features and functions were designed, developed and tested for the EVPS within the Unity game engine. Figure 4-13 represents a framework of interactivity features that were developed and tested for implementation in healthcare facilities. The framework is flexible and can evolve so that more features can be added in the future. Features developed include different modes of navigation, interactive objects and a user interface. The user interface also includes a scenario tracking system that enables end-users to keep track of the activities they perform in the EVPS. The following sections describe these features in detail.

4.5.1 Navigation

For real-time architectural visualizations, there is often a need for moveable objects and for navigation through the virtual prototype. Architectural walkthroughs are usually non-interactive flythrough videos that give the user a virtual tour of a facility by displaying pre-choreographed views of the design. Real-time rendering applications enhance user interactivity by supporting several navigation modes, such as Walk, Fly and Examine, and almost all applications support the Walk mode for architectural walkthroughs and game design.

In most applications that use interactive 3D graphics as a platform, navigation can be portrayed either from a first person point of view (PoV) or from a particular character's point of view. Using the Unity game engine, feasible ways of incorporating both modes of navigation can be explored, along with providing more customized camera control for the user.

4.5.1.1 Camera movement

Observer viewpoint and movements can include turning and changing direction very easily. The observer can spin around quickly and look very closely at any feature of the model. Attaching a camera to an object that can be controlled makes it possible to explore the facility through the first person PoV. If the camera is attached to a character controller or avatar with the camera behind the avatar, it gives the appearance of a third person PoV. Both navigation modes can also be combined if the camera object itself is controlled through user input.

4.5.1.2 First Person Controllers

The first person character controller comprises a capsule geometry, a camera attached to view the scene and a script that enables motion on user input. Some of the variables that can be controlled and modified by the user include speed, rotation, camera view rotation, and jump. In a first person controller, the camera is the user's point of view, so making it follow another object around the scene (as in a third person controller) is not required; the user controls the variables and attributes of the camera object directly. First person cameras are therefore relatively easy to implement. The standard assets of the Unity game engine include a first person controller that can be dropped into the Scene or Hierarchy window. While navigating, the character controller cannot pass through objects that have a Collider component attached, because of collision detection in the underlying Unity physics engine.
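The per-frame logic of a first person controller can be sketched as follows: mouse input turns the view (yaw), keyboard input moves the capsule forward or sideways relative to that view, and both are scaled by speed and frame time. This is a language-neutral illustration in Python, not the Unity standard-asset script. It assumes Unity's convention of a y-up world in which a yaw of 0 faces +z, and it omits collision handling, which the physics engine provides in Unity.

```python
import math


def first_person_step(x, z, yaw_deg, forward_input, strafe_input, mouse_x,
                      speed, turn_speed, dt):
    """Advance a first person controller by one frame on the ground (x-z) plane.

    forward_input / strafe_input are in [-1, 1] (e.g. W/S and A/D keys);
    mouse_x is horizontal mouse movement; turn_speed is degrees per unit of mouse_x per second.
    """
    # Rotate the view first, then move relative to the new heading.
    yaw_deg = (yaw_deg + mouse_x * turn_speed * dt) % 360.0
    yaw = math.radians(yaw_deg)
    forward = (math.sin(yaw), math.cos(yaw))   # yaw 0 faces +z
    right = (math.cos(yaw), -math.sin(yaw))    # yaw 0: right is +x
    x += (forward[0] * forward_input + right[0] * strafe_input) * speed * dt
    z += (forward[1] * forward_input + right[1] * strafe_input) * speed * dt
    return x, z, yaw_deg


# Walk forward for half a second at 2 m/s while facing +z.
print(first_person_step(0.0, 0.0, 0.0, 1.0, 0.0, 0.0, speed=2.0, turn_speed=90.0, dt=0.5))
# (0.0, 1.0, 0.0)
```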

4.5.1.3 Third Person Controllers

After the character or avatar is imported into Unity as an asset, character controllers and other scripts can be attached to it to enable third person navigation. Usually a camera is placed a set distance behind the character controller and attached to it in a parent-child relationship within the hierarchy. This enables the user to view the facility prototype through a camera that constantly follows the third person controller. Figure 4-14 shows an example of a third person controller developed for a healthcare facility project.
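The camera placement for a third person controller reduces to a small calculation: the camera sits a set distance behind the avatar along its facing direction and some height above it, optionally easing toward that spot each frame to avoid jitter. The Python sketch below is illustrative; the function names, parameter values and the smoothing step are assumptions, and in Unity the same effect can come from parenting the camera to the avatar or from a follow script.

```python
import math


def follow_camera_target(avatar_pos, yaw_deg, distance, height):
    """Point `distance` behind and `height` above an avatar facing yaw_deg (yaw 0 faces +z)."""
    ax, ay, az = avatar_pos
    yaw = math.radians(yaw_deg)
    fx, fz = math.sin(yaw), math.cos(yaw)  # avatar's forward direction on the ground plane
    return (ax - fx * distance, ay + height, az - fz * distance)


def smooth_follow(current, desired, t):
    """Move the camera a fraction t (0..1) of the way toward its desired position."""
    return tuple(c + (d - c) * t for c, d in zip(current, desired))


desired = follow_camera_target((0.0, 0.0, 0.0), yaw_deg=0.0, distance=4.0, height=2.0)
print(desired)                                       # (0.0, 2.0, -4.0)
print(smooth_follow((0.0, 2.0, 0.0), desired, 0.5))  # (0.0, 2.0, -2.0)
```

Rigid parenting corresponds to `t = 1` every frame; smaller values trade responsiveness for a steadier view.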

Since developing end-user character avatars in 3D modeling applications and animating them can be very time-consuming, Mixamo (http://www.mixamo.com/), a digital content website, was used. It allows custom characters to be downloaded with the required character animations, including walk, sit, idle and many other motions that can be used during the review of a virtual facility prototype. The website also allows custom characters created in other 3D modeling applications to be uploaded so that animations can be attached to them.

4.5.2 Interactive Objects

Elements in the model can be classified as dynamic, controller, trigger and animated objects, based on how users interact with them and how they react or behave on interaction. For dynamic objects, attributes such as rotation, position, scale and appearance can be modified through the addition of certain properties, components or custom scripts. Almost all elements of the model can have colliders so that users do not walk through them while navigating, and the floors or ground plane can have colliders so that, under gravity, the user does not fall through them. Moveable objects can have physics applied to transform their position, rotation or scale. Custom scripts offering different colors, materials and textures can be used to change the appearance of certain objects. Lastly, scripts can be used to obtain contextual information about an object when the user interacts with it, either by clicking or through any other chosen mechanism.

4.5.2.1 Controller objects

A good example of controller objects is the first person or third person point of view (PoV) navigation mode, where the capsule or avatar with an attached camera is moved within the prototype through the user's input. Other objects, such as wheelchairs, mobility devices and patient beds, can also have controller scripts attached so that they can be moved around the facility.

4.5.2.2 Trigger objects

Triggers are invisible components that, as their name implies, trigger an event. In Unity, any Collider can become a Trigger by enabling its “Is Trigger” property in the Inspector window. While navigating through the facility, users can pass through doors that open, or move a trolley or wheelchair from one space to another. This interactivity can be achieved by adding animations to doors so that they swing or slide open, and physics to objects so that they move when force is applied to them by another object, such as the character controller. Alternatively, this movement or animation can be initiated by mouse clicks or triggers. Triggers can also take the form of objects that enable an action on proximity, clicks or specific keyboard commands.
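In Unity, a collider marked as a trigger reports when another collider enters or leaves it, through the `OnTriggerEnter` and `OnTriggerExit` script callbacks. The Python sketch below imitates that behavior with an axis-aligned box and a per-frame position check to show the logic a proximity trigger relies on. The class and its names are hypothetical, and the box test is a simplification of real collider geometry.

```python
from dataclasses import dataclass


@dataclass
class TriggerVolume:
    """Invisible axis-aligned box that reports enter/exit events."""
    name: str
    min_corner: tuple
    max_corner: tuple
    occupied: bool = False

    def contains(self, point) -> bool:
        return all(lo <= c <= hi for lo, c, hi in zip(self.min_corner, point, self.max_corner))

    def update(self, point):
        """Call once per frame with the controller's position; returns 'enter', 'exit' or None."""
        inside = self.contains(point)
        event = None
        if inside and not self.occupied:
            event = "enter"
        elif not inside and self.occupied:
            event = "exit"
        self.occupied = inside
        return event


zone = TriggerVolume("door_zone", (0.0, 0.0, 0.0), (2.0, 2.5, 1.0))
path = [(-1.0, 1.0, 0.5), (0.5, 1.0, 0.5), (1.5, 1.0, 0.5), (3.0, 1.0, 0.5)]
print([zone.update(p) for p in path])  # [None, 'enter', None, 'exit']
```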

The Unity game engine is set up to show visual assets; however, these assets also have to be connected to each other to provide the interactivity expected in the virtual prototype. These connections, displayed in an object hierarchy tree, are known as dependencies. Objects have a parent-child relationship in which any change made to a parent object also affects the child objects attached to it. Objects can also be connected to other objects through scripts, so that events on one object can trigger effects on a second object. The result is that the assets are tied to each other by a myriad of scripts, like virtual bits of string, that together make up a real-time gaming environment.
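The parent-child dependency can be illustrated with a tiny scene graph in which a child's world position is its parent's world position plus its own local offset, so moving the parent moves everything attached to it. The Python sketch below handles translation only (rotation and scale are omitted) and adds a minimal listener list to mimic one object's event triggering an effect on another. The class, method and object names are hypothetical, not Unity's Transform API.

```python
class SceneNode:
    """Minimal scene-graph node: translation-only transform plus event listeners."""

    def __init__(self, name, local_pos=(0.0, 0.0, 0.0), parent=None):
        self.name = name
        self.local_pos = local_pos
        self.parent = parent
        self.children = []
        self.listeners = []  # callables run when this node fires an event
        if parent is not None:
            parent.children.append(self)

    def world_pos(self):
        """Parent's world position plus this node's local offset."""
        if self.parent is None:
            return self.local_pos
        px, py, pz = self.parent.world_pos()
        x, y, z = self.local_pos
        return (px + x, py + y, pz + z)

    def fire(self, event):
        for listener in self.listeners:
            listener(self, event)


bed = SceneNode("patient_bed", (2.0, 0.0, 3.0))
iv_pole = SceneNode("iv_pole", (0.5, 1.0, 0.0), parent=bed)
bed.local_pos = (5.0, 0.0, 3.0)   # move the parent...
print(iv_pole.world_pos())        # (5.5, 1.0, 3.0)

light = SceneNode("room_light")
log = []
bed.listeners.append(lambda src, ev: log.append(f"{light.name} reacts to {src.name}:{ev}"))
bed.fire("moved")
print(log)  # ['room_light reacts to patient_bed:moved']
```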

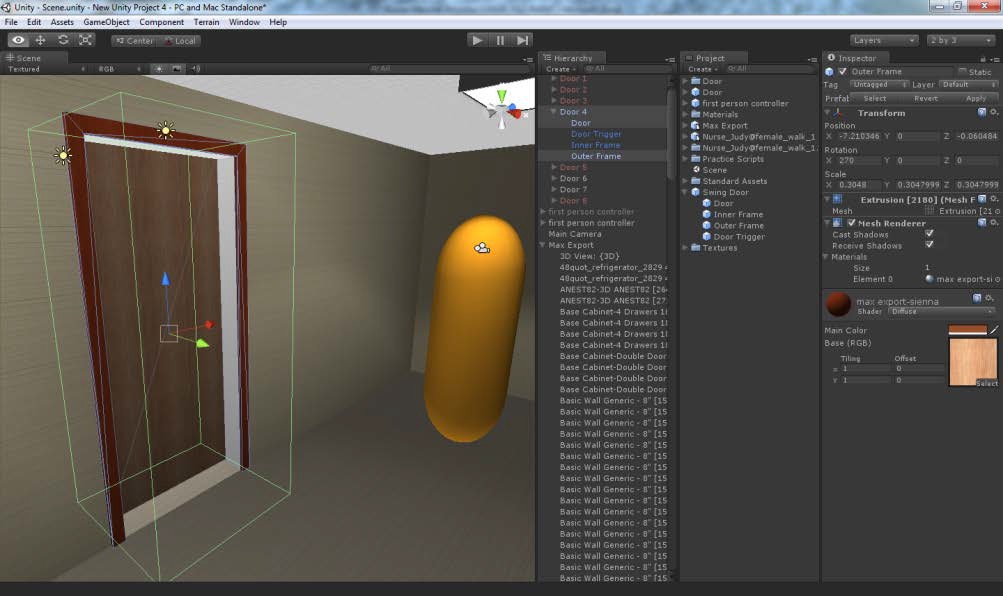

4.5.2.3 Automated Objects

In a facility model, a user can usually walk straight through a door, since the door does not have a Collider component attached to it. However, in real-time navigation of a virtual facility prototype, the ability to make the door swing open can increase the realism and the experience of the user reviewing the facility. This can be achieved by adding an animation to the door object in the Unity game engine that makes it swing open. Additionally, to ensure that the door opens only when a user approaches it, triggers can be used, either to detect the proximity of the character controller or to respond to user input. An invisible trigger collider of the required dimensions can be superimposed on the door object so that, when the user collides with it, the door animation plays and the user can walk between spaces once the door has swung open, as shown in Figure 4-15. Repeated attempts to make the door swing open revealed that the door and door frame had been combined into a single object that could not be split within Unity. To simplify the incorporation of swinging doors in the virtual facility prototype, the door frame and door panel therefore had to be split in the authoring application (such as Autodesk Revit Architecture), where the door family is edited to create two distinct objects: the door and the door frame.
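The trigger-driven door can be sketched as a small state update: the trigger sets a target angle, and each frame the door panel rotates toward it at a fixed speed. The Python sketch below assumes, as recommended above, that the door panel is a separate object from the frame, so only the panel rotates. Closing the door when the user leaves the trigger, and the angle and speed values, are illustrative assumptions; in Unity the motion itself would normally come from an animation clip.

```python
class SwingDoor:
    """Door panel (separate from the frame) that swings toward a target angle."""

    def __init__(self, open_angle=90.0, speed_deg_per_s=180.0):
        self.angle = 0.0              # current hinge angle of the panel
        self.target = 0.0
        self.open_angle = open_angle
        self.speed = speed_deg_per_s

    def on_trigger(self, user_inside: bool):
        """Called by the invisible trigger volume when the user enters or leaves."""
        self.target = self.open_angle if user_inside else 0.0

    def update(self, dt: float):
        """Rotate the panel toward its target without overshooting."""
        step = self.speed * dt
        if self.angle < self.target:
            self.angle = min(self.angle + step, self.target)
        elif self.angle > self.target:
            self.angle = max(self.angle - step, self.target)

    @property
    def is_open(self) -> bool:
        return self.angle >= self.open_angle


door = SwingDoor()
door.on_trigger(True)   # user walks into the trigger
door.update(0.25)
print(door.angle)       # 45.0
door.update(0.5)
print(door.is_open)     # True
```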

4.5.3 User Interface Development

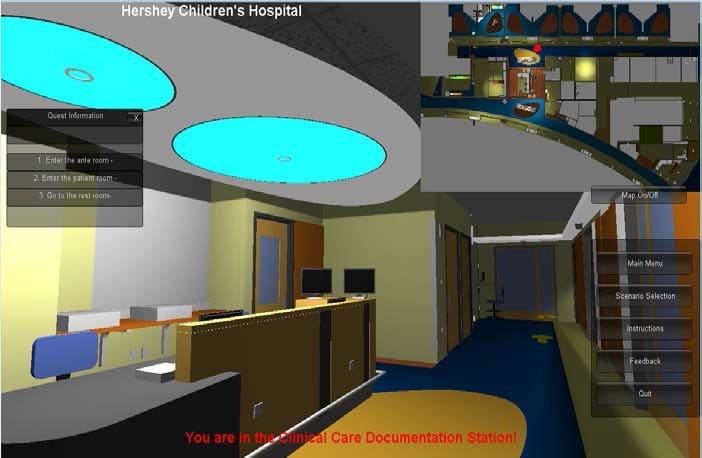

A Graphical User Interface (GUI) represents the information and actions available to a user through graphical icons and visual indicators. Based on the storyboards developed during the system design phase, menus, icons and buttons with interactivity scripts can be developed to enable various actions in the prototype. These actions include loading specific spaces and avatar models, loading websites, and keeping track of the scenarios of tasks performed by the user. The user interface can also provide context awareness and other related information to the end-user through heads-up displays and mini-maps. In Unity, the GUI system is called UnityGUI, which allows a wide variety of fully functional GUIs to be created quickly and easily.

4.5.3.1 Menu

A start menu is developed to launch when the virtual prototype is opened. As in video game development, start menus can enable the user to change options and enter levels that contain the virtual prototype of the facility (Kumar et al. 2011). The start menu for the virtual prototype can generally be saved as a separate scene, with buttons or textures that allow users to choose which spaces they want to explore or which role they want to take on.

Based on their choices, different levels or Scenes are loaded in the Unity application for the user to explore. Figure 4-16 shows an example of a start menu with interactive icons from a healthcare related project.

Figure 4-16. Start Menu with interactive buttons to load levels and change options.
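The start menu's job is essentially a lookup from the user's selections to the scene (level) to load and the avatar to use. The Python sketch below shows that lookup with hypothetical space, role, scene and avatar names. In Unity, the resolved scene name would then be passed to the engine's scene-loading call (for example, `SceneManager.LoadScene` in recent Unity versions).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MenuChoice:
    space: str  # space the user wants to explore
    role: str   # role the user wants to take on


# Hypothetical configuration: menu labels -> scene and avatar asset names.
SPACE_TO_SCENE = {"Patient Room": "PatientRoomScene", "Nurse Station": "NurseStationScene"}
ROLE_TO_AVATAR = {"Nurse": "NurseAvatar", "Patient": "PatientAvatar"}


def resolve_choice(choice: MenuChoice):
    """Return (scene to load, avatar to spawn) for a start-menu selection."""
    if choice.space not in SPACE_TO_SCENE:
        raise ValueError(f"no scene configured for space {choice.space!r}")
    if choice.role not in ROLE_TO_AVATAR:
        raise ValueError(f"no avatar configured for role {choice.role!r}")
    return SPACE_TO_SCENE[choice.space], ROLE_TO_AVATAR[choice.role]


print(resolve_choice(MenuChoice("Patient Room", "Nurse")))  # ('PatientRoomScene', 'NurseAvatar')
```

Keeping the mapping in data rather than in each button's script makes it easy to add spaces or roles without touching the menu logic.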

4.5.3.2 Heads-Up Displays

The heads-up display (HUD) is a feature used in video games that provides live, constantly updated information, such as scores, to the user. In virtual prototypes developed in Unity, textual information can be customized and displayed based on the requirements analysis. The HUD can be used to display the names of specific objects, and other information about them, when they are clicked. Additionally, when the controller object navigates and collides with trigger objects placed in different areas, the HUD can display the name and information of that space.
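The HUD logic described here amounts to two lookups: the space the controller is currently in, and the information attached to a clicked object. The Python sketch below uses rectangular zones on the floor plan in place of trigger colliders; all zone names, coordinates and object descriptions are hypothetical. Half-open bounds ensure that a point on a shared wall belongs to exactly one zone.

```python
# Hypothetical zones on the floor plan: (name, (x_min, z_min), (x_max, z_max)) in metres.
ZONES = [
    ("Waiting Area", (0.0, 0.0), (10.0, 8.0)),
    ("Exam Room 1", (10.0, 0.0), (14.0, 4.0)),
]

# Hypothetical information shown when an object is clicked.
OBJECT_INFO = {"exam_table": "Exam table: height-adjustable"}


def zone_label(x: float, z: float, zones=ZONES) -> str:
    """HUD text for the space containing (x, z); empty string if outside all zones."""
    for name, (x0, z0), (x1, z1) in zones:
        if x0 <= x < x1 and z0 <= z < z1:
            return f"You are in: {name}"
    return ""


def click_label(object_id: str) -> str:
    """HUD text for a clicked object, falling back to its identifier."""
    return OBJECT_INFO.get(object_id, object_id)


print(zone_label(11.0, 2.0))      # You are in: Exam Room 1
print(zone_label(10.0, 2.0))      # You are in: Exam Room 1
print(click_label("exam_table"))  # Exam table: height-adjustable
```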

4.5.3.3 Way-finding

Studies show that “you are here” maps aid spatial cognition by using abstract representations of the large-scale environment to provide orientation information and guide wayfinding (Dutcher 2007). A mini-map with a tracker is used to locate the user's position in real time within the virtual prototype and to aid wayfinding and spatial awareness (Klippel et al. 2010). Using an orthographic camera that views the prototype from above in plan, the mini-map is placed on the user display according to the design and storyboard requirements. Figure 4-17 shows that both HUDs and mini-maps provide customized information about the environment to the user exploring the virtual facility prototype.
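Placing the tracker on the mini-map is a linear mapping from the user's plan position to pixels on the map image produced by the top-down orthographic camera. The Python sketch below assumes the camera is centred over (`cam_x`, `cam_z`) and that, as with Unity's `Camera.orthographicSize`, the size value is half of the vertical extent of the view in world units. The function name and the pixel convention (origin at bottom-left) are assumptions.

```python
def world_to_minimap(x, z, cam_x, cam_z, ortho_size, width_px, height_px):
    """Map a world plan position (x, z) to mini-map pixel coordinates (origin bottom-left).

    ortho_size is half the vertical extent of the orthographic view in world units;
    the horizontal half-extent follows from the mini-map's aspect ratio.
    """
    half_h = ortho_size
    half_w = ortho_size * (width_px / height_px)
    u = (x - cam_x) / half_w * (width_px / 2) + width_px / 2
    v = (z - cam_z) / half_h * (height_px / 2) + height_px / 2
    return u, v


# A 200x200 px mini-map from a camera centred at the origin with orthographic size 10.
print(world_to_minimap(0.0, 0.0, 0.0, 0.0, 10.0, 200, 200))    # (100.0, 100.0)
print(world_to_minimap(5.0, -10.0, 0.0, 0.0, 10.0, 200, 200))  # (150.0, 0.0)
```

Positions outside the camera's view map outside the 0 to width and 0 to height ranges and can be clamped to the map edge if the tracker should stay visible.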

4.5.4 Scenario Scripting

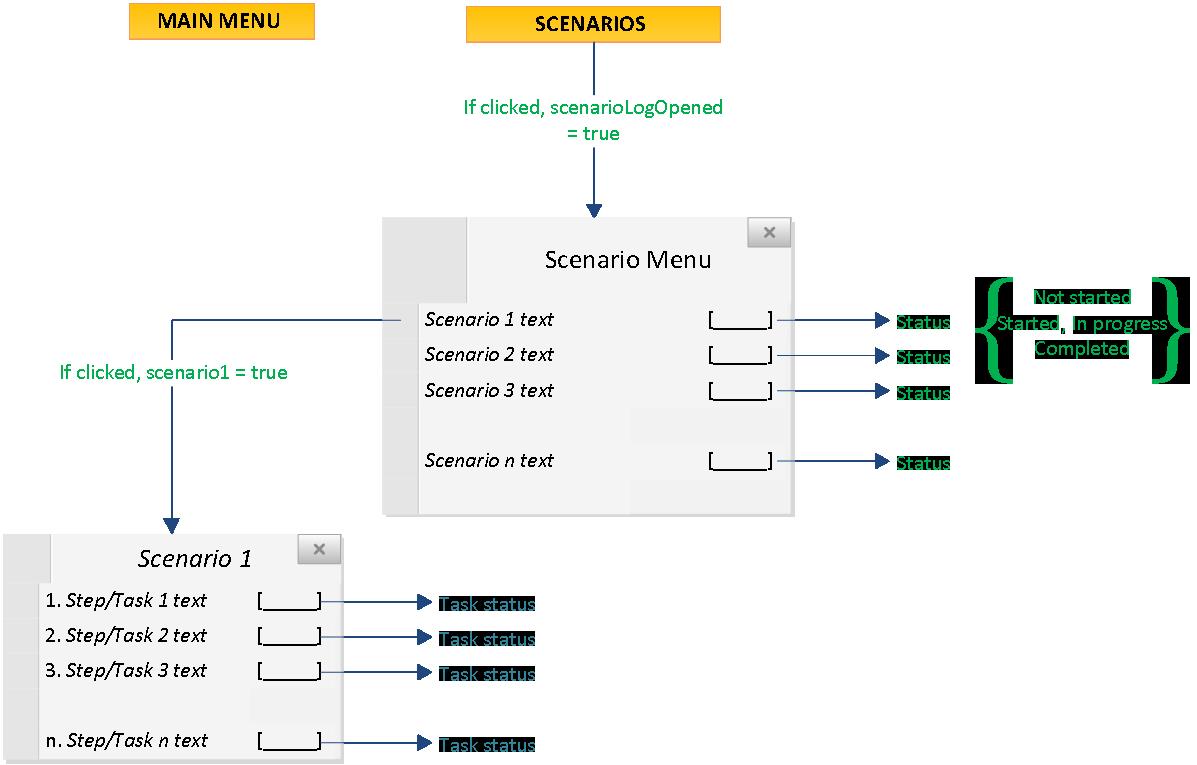

The concept of quests and level design in video games provides players with objectives and guides gameplay (Smith et al. 2011). Taking a cue from quest design, which uses a tracking mechanism to monitor players' progress within their quests, the task-based scenarios embedded in the virtual prototype use the same approach to keep track of how the user explores the prototype. Figure 4-18 shows the approach for scripting the scenario menu.

Figure 4-18. Scenario Menu and Scripting approach.

To manage the scenario tracking process, two scripts have been developed: the scenario monitoring script and the task monitoring script. The scenario monitoring script observes which step of a given scenario the user is on and ensures that the proper instructions are displayed on the user interface. The task monitoring script is responsible for checking whether the user (controller object) is interacting with the correct object (dynamic, trigger or automated) at a given step of a specific scenario. Each game object is assigned a unique identity, and the task monitoring script is applied to each object via a simple drag-and-drop interface in Unity 3D.

The Task Monitoring Script is governed by three chief variables defined within its code and a series of if statements that determine which step of a scenario task the user is on (Table 4-2). When certain conditions have been met, the script allows the user to progress to the next step in the task. All three of these variables are also passed on to the Scenario Monitoring Script, which is responsible for displaying the current task information on the user interface.

Table 4-2. Scenario Tracking script with its variables and functions.

With reference to the three variables from Table 4-2 (above), a set of three if statements must be modeled in code for every possible step of a scenario task. If any of the three if statements evaluates to false, the step is incomplete and the user cannot progress to the next step. In summary, these if statements check the following conditions:

1. Has the user completed all of the prerequisite steps prior to the current step?

2. For a given step, is the user interacting with the correct object?

3. Has the user completed the current task step?

If all three conditions evaluate to true (the code equivalent of a ‘yes’), the value of the Step Count variable is increased by one, signifying that the user can progress to the next step in the scenario task.
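The three checks can be sketched as a step counter guarded by three if statements, one per question above. The Python sketch below is an illustration, not the script summarized in Table 4-2. The `Step` and `ScenarioTask` types, their fields and the example scenario are hypothetical; `step_count` stands in for the Step Count variable, and `current_instruction` plays the part of the scenario monitoring script's display text.

```python
from dataclasses import dataclass


@dataclass
class Step:
    instruction: str  # text shown on the user interface
    object_id: str    # unique identity of the object this step requires


@dataclass
class ScenarioTask:
    steps: list
    step_count: int = 0  # number of completed steps

    @property
    def finished(self) -> bool:
        return self.step_count >= len(self.steps)

    def current_instruction(self) -> str:
        return "Scenario complete" if self.finished else self.steps[self.step_count].instruction

    def try_advance(self, step_index: int, object_id: str, step_completed: bool) -> bool:
        """Called by an object's task monitor when the user interacts with it."""
        if self.finished:
            return False
        # 1. Prerequisites: all earlier steps are done, so this is the current step.
        if step_index != self.step_count:
            return False
        # 2. Is the user interacting with the object this step expects?
        if object_id != self.steps[step_index].object_id:
            return False
        # 3. Has the user completed the action for this step?
        if not step_completed:
            return False
        self.step_count += 1
        return True


task = ScenarioTask([
    Step("Collect the medication cart", "med_cart"),
    Step("Open the patient room door", "room_101_door"),
    Step("Go to the patient bed", "bed_101"),
])
# Skipping ahead fails the prerequisite check.
print(task.try_advance(1, "room_101_door", True))  # False
print(task.try_advance(0, "med_cart", True))       # True
print(task.current_instruction())                  # Open the patient room door
```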

The next section describes the overall process for experience-based virtual prototyping and discusses process validation, a framework for developing reusable model content, and strategies for development.

4.6 PROCESS VALIDATION

In the final step of the process, the framework for structuring scenarios and the information architecture for the experience-based virtual prototyping system were validated. Validation of the EVPS development process was achieved through initial testing of the design and concepts on various project cases related to healthcare facility design, as well as through informal interviews with industry professionals and subject matter experts who had the required domain-specific knowledge of developing applications with game engines. The framework was revised based on the expert feedback, and the required changes were implemented in the development process of the Experience-based Virtual Prototyping System (EVPS).

Throughout the application development process, the research intent was to continually assess, as part of internal validation, the capabilities and limitations of the programming environment and game engine used, as well as the procedure employed to develop the scenario-based interactive virtual environment for end-user testing. The strategies identified for rapid virtual prototyping included the use of reusable model content and the determination of the level of effort required for EVPS development.

4.6.1 Framework to rapidly develop reusable model content

Developing model content and transferring it in suitable file formats to work with the required virtual environment outputs takes a significant amount of time and effort. For the facility model, Leite et al. (2011) discuss the modeling effort associated with generating building information models (BIM) at higher levels of detail (LoD). Often, facility BIMs are not modeled to an adequate LoD, and other interactive objects are either not modeled to the desired LoD or not modeled at all. Modeling the additional interactive objects required by the specifications can be very time-consuming and may not be worth the effort or resources.

Online Digital Content Creation (DCC) vendors can be used to access 3D model content, and access to DCC websites and other resources can shorten design development cycles. Examples include avatar websites, the Google SketchUp 3D Warehouse, the Unity Asset Store and Mixamo. Digital model content can be combined with interactive behaviors and packaged for reuse in other healthcare or related projects.

Examples of packages include a nurse avatar with zoom and rotate camera controls, moveable patient beds and medical equipment, scripts for custom menus and widgets, an avatar on a mobility device, and doors that swing open. Figure 4-19 shows an example of reusable model content: an avatar of an elderly person was designed and downloaded from the Mixamo website, a digital model of a mobility device was supplied by the scooter manufacturer, and customized controller and moveable object scripts were applied to create a package that can be used across different virtual prototyping projects.

++++

[Publisher’s note: Dr. Kumar told the publisher that he is the model for the avatar shown here.]

++++

Finally, an element library can help organize and store developed content and make it available for use in later projects. The objective of developing this library is to gradually grow the amount of reusable model content and make it available for use on open source websites. A similar effort is under way by Dunston et al. (2010), who are working with the Technology HUB initiative, which leverages cyberinfrastructure to share resources for the design of virtual healthcare environments. The intent was to let partners across universities share 3D model content of objects such as furniture and medical equipment, along with computer code to use these models in their respective projects.

4.6.2 EVPS Development Strategy

The scenario framework from requirements analysis phase and the system architecture from the system design phase can be used together to develop a strategy for implementation and development of EVPS on healthcare projects. A template strategy plan (Appendix A) for experience-based virtual prototyping was created to aid in the EVPS development. The EVPS plan proposed helps identify specifications and modeling requirements by asking the following questions related to model content and media used for interactivity:

Model Content

- What elements and properties should be included?

- What is the required level of detail?

- What is the required level of realism, or abstraction?

- What is the required level of interaction with end-users?

Media Used for Prototype Interaction

- What display system(s) are to be supported? E.g., desktop, large immersive display

- What interactive devices will be used? E.g., mouse, joystick, data glove

- What display resolution will be supported?

- Will you aim to support stereoscopic visualization, or surround sound audio?

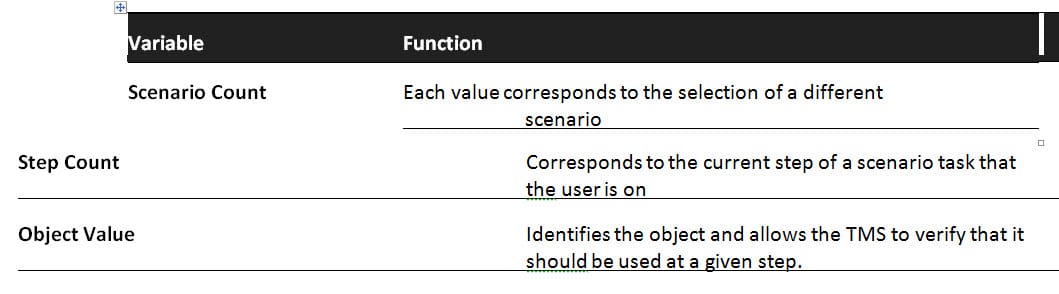

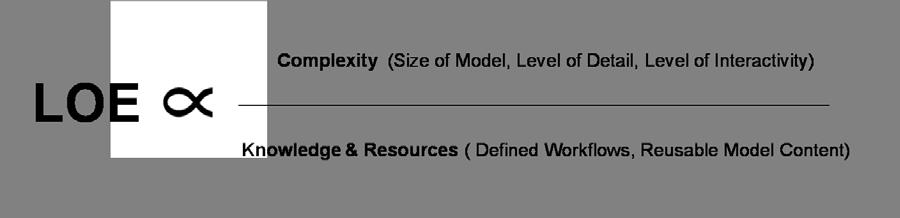

Based on the answers to the above questions about the amount and type of model content required and the selection of media for interaction, detailed steps for EVPS development can be laid out. The EVPS development process was implemented on various healthcare projects to determine the challenges, resources and time-consuming tasks involved in meeting the virtual prototyping requirements. Figure 4-20 shows a conceptual representation of the level of effort (LOE) required for EVPS development, which increases with the complexity of the model content and the desired level of detail, level of realism and level of interactivity.

However, repeated testing of the EVPS development process on various projects also indicated that leveraging reusable interactive model content, clearly defined design information workflows, and greater knowledge of or experience with the virtual prototyping process may reduce the level of effort required.

During EVPS development, it has been important to “prototype the prototype,” to ensure that the knowledge and resources available can sufficiently meet the requirements set during the requirements analysis phase. Based on the challenges and limitations encountered during initial development, requirements and specifications can be revisited and negotiated based on available resources. To illustrate, Table 4-3 shows an example matrix for specifying the requirements while comparing them with the resources available to be able to effectively scope out the development process. Based on the end user specifications, the level of effort required for each aspect of model content and level of interactivity can be mapped.

The example in the table shows that the EVPS specification for a specific project may require a low level of effort in model content detailing and lighting but a medium level of effort for realism with textures for a large model. Similarly, from the interactivity standpoint, a low level of effort will be required for the movement-based scenario and navigation point of view, but a medium level for interaction with objects and the graphical user interface. This matrix can help determine the complexity of the EVPS for any specific project. Then, based on the developer's knowledge, experience, and access to reusable content and EVPS development workflows, the level of effort or time required for EVPS development can be determined.

Table 4-3. Matrix of example specifications mapped based on level of effort.
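To make the mapping concrete, the example specification described above can be encoded as a small matrix and summarized per category. The Python sketch below assumes a hypothetical numeric scale (low = 1, medium = 2, high = 3) and simple sums. The thesis does not prescribe a scoring scheme, so the totals are only a relative indicator of where development effort is likely to concentrate.

```python
LEVEL_SCORE = {"low": 1, "medium": 2, "high": 3}  # hypothetical scale

# Example specification from the text (model size, "large", is recorded separately).
example_spec = {
    "model content": {
        "detailing": "low",
        "lighting": "low",
        "realism/textures": "medium",
    },
    "interactivity": {
        "movement-based scenario": "low",
        "navigation point of view": "low",
        "object interaction": "medium",
        "graphical user interface": "medium",
    },
}


def effort_by_category(spec: dict) -> dict:
    """Sum the effort scores of the aspects in each category."""
    return {cat: sum(LEVEL_SCORE[lvl] for lvl in aspects.values()) for cat, aspects in spec.items()}


def aspects_at_or_above(spec: dict, level: str) -> list:
    """List (category, aspect) pairs whose effort is at least the given level."""
    threshold = LEVEL_SCORE[level]
    return sorted((cat, aspect) for cat, aspects in spec.items()
                  for aspect, lvl in aspects.items() if LEVEL_SCORE[lvl] >= threshold)


print(effort_by_category(example_spec))  # {'model content': 4, 'interactivity': 6}
print(aspects_at_or_above(example_spec, "medium"))
```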

4.6.3 Experience-based Virtual Prototyping Procedure

This research activity was focused on refining, improving and expanding the procedure to rapidly convert design models from BIM authoring tools into the virtual prototyping system, while incorporating task-based scenarios. Figure 4-21 shows the overall experience-based virtual prototyping procedure that gradually emerged from iterations of developing virtual prototypes using various case study projects as well as methods adapted from other virtual reality system implementation studies (Wilson and D’Cruz 2006; Wilson 1999).

As discussed throughout this chapter, the steps for developing the EVPS are 1) requirements analysis, 2) system design, 3) system development, 4) system validation and, finally, 5) implementation on a project. Requirements analysis encompasses identifying stakeholders, establishing goals, and undertaking user needs analysis. The framework of scenarios proposed in Section 4.2.3 can be leveraged to identify the model content and interactivity features required.

The second phase, system design, begins with concept design through storyboarding, followed by the use of the system architecture to identify an appropriate programming environment and media for interaction. Strategies for development and the EVPS development plan (Appendix A) can also be used to detail the development steps. The third phase, system development, includes transferring BIM and other digital model content into the programming environment and incorporating interactive features and functionality in the EVPS.

Finally, the system and process can be validated and lessons learned can inform the development process until the EVPS is ready for implementation on a healthcare facility project for end-user design review.

Figure 4-21. Experience-based Virtual Prototyping procedure.

4.7 SUMMARY

This chapter described the procedure for developing experience-based virtual prototyping systems. The steps for EVPS development included requirements analysis, system design, system development and, finally, system validation for implementation. Several project examples were used to illustrate and test these processes. Finally, strategies for rapid EVPS development were proposed and the overall EVPS procedure was presented. The next chapter will introduce the Hershey Children’s Hospital case study, where the EVPS process is implemented and assessed.

++++

Copyright 2013 by Sonali Kumar. All rights reserved. Thesis published on this site by the express permission of Sonali Kumar.
